A human approach to crafting an AI policy

With AI progressing at an unstoppable rate, the issues and opportunities that come with its use are just as relentless. Any company using generative AI in any aspect of its business is finding itself facing questions, both internal and external, that need to be answered. Answering them means defining the company's perspective on these issues: what it thinks about AI and what kind of thinking will drive its approach as the technology continues to explode.

Enter the AI policy.

Inviting collaboration

When it came time to craft an AI policy, McGuffin’s leadership team didn’t want it to be a top-down dictate. Given the scope and importance of the topic, they believed it should involve the whole company.

“McGuffin is just not like ‘AND FROM ABOVE!’,” Meg Eulberg, McGuffin’s Director of Operations, said.

Generative AI is moving quickly and touches nearly every aspect of agency work, from design and research to workflow and strategy. For McGuffin, that breadth made the case clear: issues that affect the whole company are best addressed by engaging the whole company.

This approach reflects McGuffin’s collaborative culture, where ideas often emerge from dialogue rather than hierarchy. By involving people across the company, from designers to strategists to account teams, McGuffin could build a policy that incorporated diverse perspectives and practical insights from the people using these tools every day.

Conversations about AI were already happening across the organization, and clients were beginning to ask how McGuffin planned to approach the technology. The company needed a unified perspective before those conversations could happen externally.

President and Creative Director Chris Sculles describes how the leadership team approached the endeavor: “We didn’t try to come up with all the answers ourselves. We identified the core issues and threw it out to the team.”

First, leadership identified several key areas the policy would need to address, including:

- content and copywriting

- concepting and visual design

- client communications

- innovation

- ethical considerations

- environmental impact

- security

- operational workflows

The company formed committees around each topic and asked employees to volunteer for the areas they were most passionate about. Because people joined the committees that interested them most, discussions sprang from genuine curiosity and personal values. The result surprised even leadership.

“I knew the work would be good,” Meg said. “But what they came back with was so smart and thoughtful and right on the money.”

A sense of ownership

Opening the process to the entire company produces more than just good ideas — it strengthens collective ownership of the policy itself.

Employees brought forward questions leadership had not yet considered, such as how to balance efficiency with environmental concerns. Some team members were eager early adopters of AI tools, while others approached the technology with caution.

“We have people who are early adopters,” Chris said. “And we have people who are very cautious about it. That’s great, because you get multiple points of view.”

That diversity helped the teams reach a balanced perspective. The goal was neither to reject AI nor to adopt it blindly, but to define how it could be used responsibly and effectively within McGuffin’s creative and strategic work. The collaborative format also reflects the company’s broader culture.

“If this had come from up high — ‘Here are the seven bullets you must follow’ — people wouldn’t care,” Chris noted. “But when everyone contributes, everyone owns it.”

In many ways, the process itself became a demonstration of the company’s stated values: an exercise in shared decision-making, open discussion and respectful challenge. The principles of respect, collaboration and thoughtful problem-solving were evident in every section.

During internal readouts of the committee work, employees asked probing questions and debated ideas freely, but always with a shared sense of respect and purpose.

“People aren’t afraid to challenge each other here,” Meg Eulberg said, “but everyone respects the work and the people doing it.”

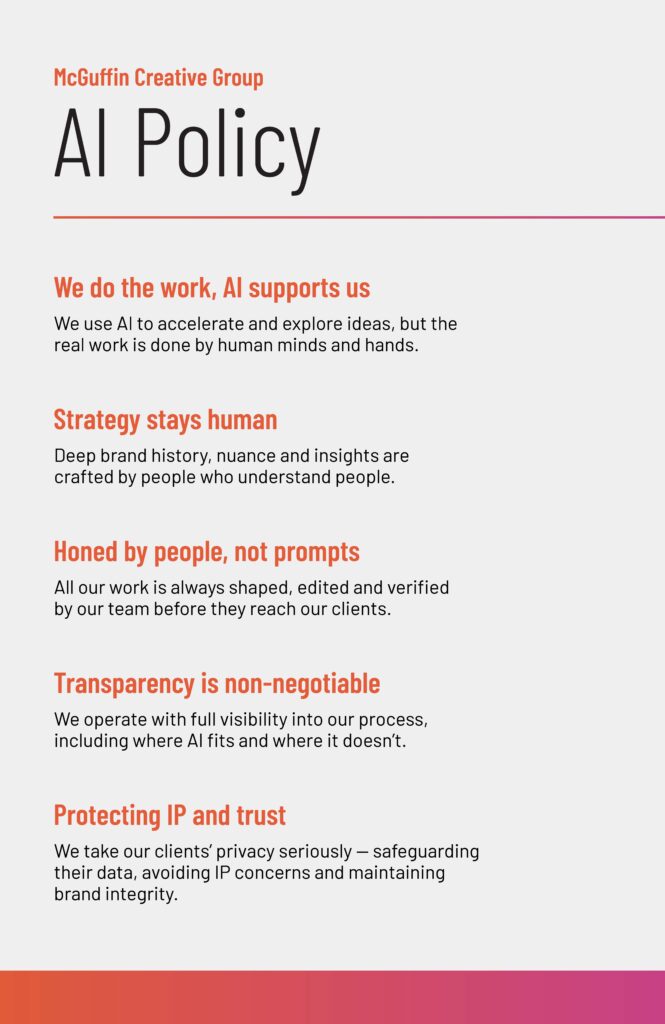

Supporting, not replacing, human creativity

Part of the discussion centered on the role AI should play within the agency.

“It should always be a tool to help us reach a goal or solve a business problem,” Chris Sculles said. “We should still be the designers, the thinkers, the leaders of it.”

That means using AI to automate repetitive or time-consuming tasks while preserving the human insight that drives strong creative and strategic work. For example, tools that help analyze large amounts of data or streamline production processes can free up teams to focus on the ideas and storytelling that clients truly value.

Team members currently use AI to visualize novel concepts for clients, conduct efficient deep dives and data evaluation for strategy, and build agents that evaluate copy against existing brand style guidelines.

A living document

The pace of change in AI is extraordinary — far faster and more pervasive than previous technological shifts such as the rise of the internet. A platform or capability that didn’t exist last month may become essential in the near future. For that reason, the policy is designed as a living document.

Future phases of the initiative will focus on knowledge sharing, training and experimentation, ensuring that team members can learn from one another as new tools emerge. Ultimately, the goal is both simple and ambitious: to establish a clear point of view that guides McGuffin’s work and can be communicated simply to clients.

“If someone asks how we approach AI,” Chris Sculles said, “we want an answer that is memorable and repeatable.”

Tips for creating your own AI policy

While we’re not experts, this experience has taught us quite a bit about embracing new technologies together and creating flexible boundaries around how to use them. Here are some of our tips for creating your own AI policy with your team:

- Involve the whole team: Divide and conquer. It’s an enormous topic. Create categories of concern and then invite team members to brainstorm together on that topic. For larger enterprises where 100% participation is not practical, ensure representation across disciplines and departments, and encourage transparency and communication within those segments.

- Invite different perspectives and encourage conversation: Leadership asked that each committee come back with seven bullet points summarizing what they learned and their recommendations. In the end, we got a comprehensive document that included many viewpoints and served as a jumping-off point for further discussion and refinement.

- Avoid top-down mandates: Telling your team they must use AI for x number of hours per day isn’t very encouraging or useful. Instead, understand how AI tools are already being used and create policies that help people use them more efficiently and effectively.

- Embrace the unknown: When it comes to AI, things will change quickly. Make sure your policy is flexible and ever-evolving, not set in stone.

“The work is never done,” Meg Eulberg said. “We’re setting the principles now, but how we apply them will continue to evolve.”